What are Distributed CACHES and how do they manage DATA CONSISTENCY?

Comments:

Gaurav nice video. One comment. Writeback cache refers to writing to cache first and then the update gets propagated to db asynchronously from cache. What you're describing as writeback is actually write-through, since in write through, order of writing (to db or cache first) doesn't matter.

Gaurav - One question here: I got the part about write-through and write-back, but what about read-through and read-back?

All the ways explained here, irrespective of the order of writing to DB or cache, are write-through caching. In write-back, only the cache is updated and the DB is not part of the I/O completion. The DB is updated later in the background. @gaurav Sen

What is Kafka and where is it used here?

thank you so much 😌

Many relevant issues were discussed, but a resource with intent to educate should not have incorrect facts (wrong terminology in this case: write-through, write-around, write-back all mixed up). It causes more harm than good. I hope this is withdrawn and a new video is created.

Notes:

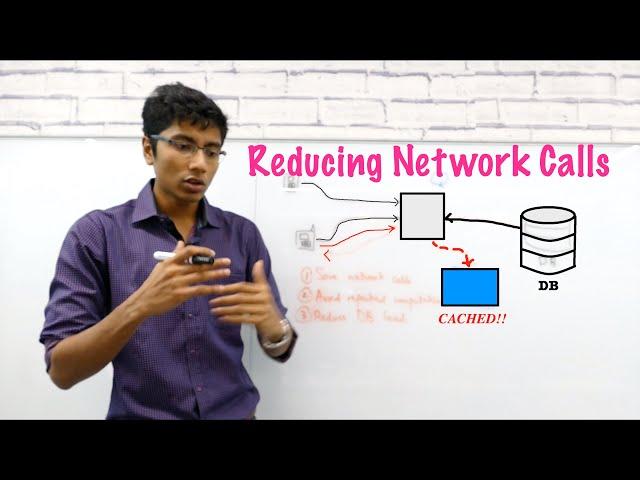

In Memory Caching

- Save network calls - For commonly accessed data

- Avoid Re-computation - For frequent computation like finding average age

- Reduce DB Load - Hit cache before querying DB
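The "hit cache before querying DB" idea can be sketched as below. This is only a minimal illustration, assuming a single process; `ReadCache`, `load_from_db`, and `fake_db` are hypothetical names introduced for the example, and a plain dict stands in for the real database.

```python
from typing import Callable, Dict


class ReadCache:
    """Check the cache first; fall back to the DB only on a miss."""

    def __init__(self, load_from_db: Callable[[str], str]) -> None:
        self._load_from_db = load_from_db   # hypothetical DB lookup function
        self._entries: Dict[str, str] = {}  # in-memory cache
        self.db_calls = 0                   # counts how often the DB is hit

    def get(self, key: str) -> str:
        if key in self._entries:
            return self._entries[key]       # cache hit: no DB call
        self.db_calls += 1                  # cache miss: one DB call
        value = self._load_from_db(key)
        self._entries[key] = value          # remember it for next time
        return value


# A dict stands in for the database (assumption for this sketch).
fake_db = {"user:1": "Alice"}
cache = ReadCache(fake_db.__getitem__)

cache.get("user:1")    # miss, loads from the DB
cache.get("user:1")    # hit, served from memory
print(cache.db_calls)  # 1
```

Only the first read reaches the database; the repeat read is served from memory.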

Drawbacks of Cache

- Hardware (RAM) is much more expensive per GB than the disk storage a DB uses

- As we store more data in the cache, search time increases (counterproductive)

Design

- Database (Infinite information) vs Cache (Relevant information)

Cache Policy

- Least Recently Used (LRU) - Top entries are the most recent ones; when the cache is full, remove the least recently used entries
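A minimal LRU sketch, assuming a small fixed capacity and Python's standard `collections.OrderedDict`. The class name `LRUCache` and its methods are made up for this example. Here the most recent entries sit at the end of the ordering rather than the top, which is only a storage detail.

```python
from collections import OrderedDict
from typing import Hashable, Optional


class LRUCache:
    """Evicts the least recently used entry once capacity is exceeded."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._capacity = capacity
        self._entries: "OrderedDict[Hashable, object]" = OrderedDict()

    def get(self, key: Hashable) -> Optional[object]:
        if key not in self._entries:
            return None                    # cache miss
        self._entries.move_to_end(key)     # mark as most recently used
        return self._entries[key]

    def put(self, key: Hashable, value: object) -> None:
        if key in self._entries:
            self._entries.move_to_end(key)
        self._entries[key] = value
        if len(self._entries) > self._capacity:
            self._entries.popitem(last=False)  # drop least recently used


lru = LRUCache(capacity=2)
lru.put("a", 1)
lru.put("b", 2)
lru.get("a")         # "a" becomes the most recently used entry
lru.put("c", 3)      # cache is full, so "b" is evicted
print(lru.get("b"))  # None
print(lru.get("a"))  # 1
```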

Issue with caches

- Extra calls - On a cache miss we pay for the cache lookup and then still have to query the database

- Thrashing - Entries are repeatedly loaded into the cache and evicted before they are ever used

- Consistency - When we update the DB, we must keep the cache and the DB consistent

Where to place the cache

- Close to server (in memory)

  - Benefit - Fast

  - Issue - Maintaining consistency between the memory of different servers, especially for sensitive data such as passwords

- Close to DB (global cache, e.g. Redis)

  - Benefit - Accurate, able to scale independently

Write-through vs Write-back

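- Write-through - Every write updates both the cache and the DB synchronously; the write completes only once the DB has it

  - Not enough on its own with multiple servers - An update applied to one server's in-memory cache leaves the other servers' copies stale

- Write-back - Update the cache only and complete the write; the DB is updated later, asynchronously

  - Issue: Durability - If the cache fails before the DB is updated, the write is lost, so it is risky for critical data

- Update DB first, then invalidate the cache (what the video describes as write-back)

  - Issue: Performance - Every DB update triggers cache invalidation, even though most cached entries are still fine, so invalidating them is expensive

- Hybrid

  - Any update is first written to the cache

  - After a while, persist the entries to the database in bulk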
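A rough sketch of the two write policies, using the standard definitions: write-through updates the DB and the cache in the same operation, while write-back updates the cache first and persists to the DB later in bulk (the flow listed under Hybrid). The class names are hypothetical, a dict stands in for the database, and `flush()` is called by hand where a real system would run it in the background.

```python
from typing import Dict, Set


class WriteThroughCache:
    """Each write goes to the DB and the cache before it completes."""

    def __init__(self, db: Dict[str, str]) -> None:
        self.db = db                       # dict standing in for the DB
        self.entries: Dict[str, str] = {}

    def write(self, key: str, value: str) -> None:
        self.db[key] = value               # persisted synchronously
        self.entries[key] = value


class WriteBackCache:
    """Writes complete once the cache is updated; the DB is updated later."""

    def __init__(self, db: Dict[str, str]) -> None:
        self.db = db
        self.entries: Dict[str, str] = {}
        self.dirty: Set[str] = set()       # keys not yet persisted

    def write(self, key: str, value: str) -> None:
        self.entries[key] = value
        self.dirty.add(key)                # DB is NOT touched here

    def flush(self) -> None:
        # Persist all pending entries in bulk (run manually in this sketch).
        for key in self.dirty:
            self.db[key] = self.entries[key]
        self.dirty.clear()


through_db: Dict[str, str] = {}
wt = WriteThroughCache(through_db)
wt.write("x", "1")
print(through_db)         # {'x': '1'}

back_db: Dict[str, str] = {}
wb = WriteBackCache(back_db)
wb.write("x", "1")
wb.write("x", "2")
before_flush = dict(back_db)
print(before_flush)       # {} (nothing persisted yet)
wb.flush()
print(back_db)            # {'x': '2'}
```

If the process dies before `flush()` runs, the pending writes are gone, which is why write-back is usually kept away from critical data.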

I love your word lingos ..😂😂

The least frequently used is not being frequently used in real world ...damn u are the funniest online educator with great content

Where did you get that white board?

This everything what I needed. I am really looking forward to learn that how can create an online game hosting server . I researched a lot on how do it and I didn't get it what is exactly happening. Your CDN video was really good 👍. Now I have understood how exactly CDN works and why it uses distributed caching 👍💯

Cache are in-memory, so RAM not SSD

Hey! Thanks for the video.

One question: If what pinned comment says is right, then, what would be the reason for Write-Back cache to be expensive?

Also, if what pinned comment says is right, then, we won't be able to use Write-Back mechanism where data is critical ( like financial, and passwords ) since DB is going to be updated asynchronously, and it could lead to inconsistencies, right ?

Please answer these. Thanks!

How exactly do you benefit from reduced network calls by having a cache server/distributed cache servers?

Aren't you still going to be making network calls to these cache servers? The only benefit I see is that cache will make use of in-memory data storage. What am I missing?

Please make a full series in Redis or Paid Course.

Thanks

Solid explanation

Hi, can you please do a video on ncache as well

Wouldn't it make sense to put a load balancer in front of the distributed cache? And, then also in front of the DB? Trying to figure out why most architectures will put a load balancer in some layers, but not others. Like it seems that as long as we have a distributed/replicated component, then we would need to have an LB in front of it. Maybe some diagrams don't include it because some components have load balancing as a part of it; for example, an API gateway could be doing its own load balancing to know which service node to go to.

Anyone caching passwords? Please let me know if you do

Bhai. u r a life saver! Brilliant tutoring. Thank you!

Great video👏

I couldn't get info on distributed caches.

Is the Redis that you mentioned here a Redis cache server or a Redis database?

Hi

Good

Can you show that you have implemented

Thanks

Nice Explanation Gaurav. This video covers basics of caching. In one of the interviews, I was asked to design the Caching System for stream of objects having validity. Is it possible for you to make some video on this system design topic?

I think you mixed up write-through and write-back.

- write-through - the transaction is held until data is at rest (in DB). If transaction completed, data is persistently stored.

- write-back - the transaction completes (from user POV) as soon as cache is updated, DB update happens later. If cache fails, data may be lost.

Explained like my interviewed candidate today.

These videos are fantastic, thanks for making them!

But, I’m having trouble with one part of the video. In the example where you’re describing the differences between write-through and write-back, you mention that write-through isn’t viable if the value of x changes and is only updated in one servers in-memory cache - makes sense.

But, how does write-back solve that problem?

Server 1 performing a write-back is just updating the DB and evicting x in their own cache, but it doesn’t invalidate/evict x from another server’s (Server 2’s) cache. So, it seems to me that if S1 updates X with a write-back policy, and S2 reads X, there’s still opportunity for inconsistency.

Hope that makes sense and would love to get your (or anyones) feedback!

The comment section tho oof

wonderfully explained. thanks

Please make a video on this topic "Design Peer to Peer network".

Data is never guaranteed to be consistent when a cache is used; you might get older data. I don't understand why we need to use it if it is not the latest data, as it might cause issues that clients may report.

Around 3.30, listed in the PROs of having Cache system. Can someone explain how network calls are being reduced. In real time scenarios, how can we reduce network calls because we are anyway pinging DB for information.

great video,very helpful to learn english

Great explanation

So good!

Hi Gaurav. Thank you so much for the video. I have one question tho. Say we have multiple caching instances, what tools can we use to distribute the calls from the web services to these global cache instances. One thing I have in mind is load balancer, but can load balancer be used in this scenario?

Hi guys, I am new to Redis. I got a sudden requirement from a client on one of my new services.

The service is written in Ruby, and the database it uses is an EDW (Snowflake). For caching it uses Redis.

Now they expect me to migrate from one Redis to another Redis.

Can someone please help me understand what exactly that means?

It's like computer organization

Misleading title. where is the distributed part?

Teaching and learning are processes. Gaurav makes it fun to learn about stuff, then let it be systems or the egg dropping problem.

I might just take the InterviewReady course to participate in the interactive sessions.

Take a bow!

Amazing Explanation!! Thanks!!

<3

I was squinting my eyes to look at the white board lol. Awesome video nonetheless.

learned a ton in this video thanks so much

You didn't mention that with a distributed cache, you can make microservices stateless!
