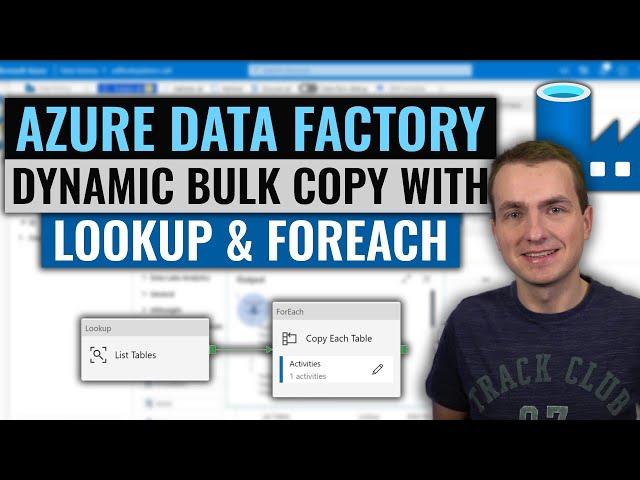

Azure Data Factory | Copy multiple tables in Bulk with Lookup & ForEach

Comments:

Thanks!

I did that with MS SQL, but I have an issue when I add new columns in the source. Even when I put a pre-copy query on the sink to drop the table, the error says the new columns don't exist in the sink table.

Could someone please help me?

Thanks, this is beautiful.

Very good explanation! I will try to read a list of tables, not to export them but to mask certain columns. I guess I have to use a derived column inside the ForEach loop, maybe, with three parameters: schema_name, table_name and column_name. But how do I express: update <schema_name>.<table_name> set <column_name> = sh2(<column_name>) where Key in (select Key from othertable) in a derived column name context?
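One possible way to approach the statement in the comment above, sketched in Python rather than in a derived column: build the UPDATE text from the three parameters and pass it to something that can execute SQL. The helper names `quote_ident` and `build_mask_update` are hypothetical, and `HASHBYTES('SHA2_256', ...)` is only a guess at what `sh2` was meant to be; `Key` and `othertable` are taken from the comment as-is.

```python
def quote_ident(name: str) -> str:
    """Bracket-quote a SQL Server identifier, escaping any closing bracket."""
    return "[" + name.replace("]", "]]") + "]"


def build_mask_update(schema_name: str, table_name: str, column_name: str) -> str:
    """Return an UPDATE statement that overwrites one column with a hash of itself.

    Assumption: the column is a character column wide enough to hold
    the 64 hex characters of a SHA-256 digest.
    """
    target = f"{quote_ident(schema_name)}.{quote_ident(table_name)}"
    column = quote_ident(column_name)
    hashed = (
        "CONVERT(varchar(64), "
        f"HASHBYTES('SHA2_256', CAST({column} AS nvarchar(4000))), 2)"
    )
    return (
        f"UPDATE {target} SET {column} = {hashed} "
        "WHERE [Key] IN (SELECT [Key] FROM othertable)"
    )


# Example with made-up names; in a pipeline these would come from the loop item.
print(build_mask_update("dbo", "Customers", "Email"))
```

Quoting the identifiers keeps an unusual table or column name from breaking the generated statement.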

Can we export only top 1000 rows from each table?
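A minimal sketch of one way this might be done, assuming the copy source is allowed to use a query instead of a whole table: generate a `SELECT TOP` statement per table. The function name `build_top_n_query` and the example table are hypothetical.

```python
def build_top_n_query(schema_name: str, table_name: str, limit: int = 1000) -> str:
    """Return a query that reads at most `limit` rows from one table."""
    if limit <= 0:
        raise ValueError("limit must be a positive integer")

    def quote(name: str) -> str:
        # Bracket-quote the identifier and escape any closing bracket.
        return "[" + name.replace("]", "]]") + "]"

    return f"SELECT TOP ({int(limit)}) * FROM {quote(schema_name)}.{quote(table_name)}"


# Example with a made-up table name.
print(build_top_n_query("dbo", "Orders"))
```

Without an ORDER BY clause, which 1000 rows come back is not guaranteed, so an ordering column would need to be added if a specific subset matters.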

Can we do the same activities on various lists in a SharePoint site?

Hi Adam, how do I ingest an Excel file from a container into a Synapse table?

This video was really helpful! You have leveled up my Azure skills. Thank you, sir, you have gained another subscriber.

THANK YOU SO MUCH for this! The step-by-step really helped with what I needed to do.

It seems that Lookup and ForEach do not work together when the Lookup is bound to one linked service and dataset and the ForEach to another (the Lookup is on Azure SQL Server and the ForEach is on another on-premises server).

That's not really what I am looking for: copying tables from db1 to db2 (different servers) based on a source/destination list.

Brilliant teaching style Adam. Very watchable. I particularly like how you explain the background. I've subscribed and will watch more of your videos.

awesome

Great one!

Very Good

Awesome, Adam, there can't be a better way to explain this.

Thank you so much for this. It helped a lot

Amazingly simple and informative!

Hi Adam, thanks for the video! I am wondering if it is possible to specify just one row from the table by id and copy it? Thanks in advance!

Thanks for the video, that's a great one.

I have a question: this pipeline will run on all the tables, or on some tables according to our logic. But what if we add new tables to the data source and run this pipeline again? It will run over all the tables again, right? How can we run it only on newly added tables, not on the ones that already exist on the target?

Hope you can help me.

Thank you
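One way to think about the question above, as a rough sketch: compare the list of tables in the source with the list already present on the target and loop only over the difference. The function name `tables_to_copy` and the sample table names are hypothetical, and treating names as case-insensitive is an assumption that depends on the database collation.

```python
from typing import Iterable, List, Tuple

TableRef = Tuple[str, str]  # (schema_name, table_name)


def tables_to_copy(
    source_tables: Iterable[TableRef], target_tables: Iterable[TableRef]
) -> List[TableRef]:
    """Return source tables that do not yet exist on the target, in source order."""
    # Assumption: schema and table names compare case-insensitively.
    existing = {(schema.lower(), table.lower()) for schema, table in target_tables}
    return [
        (schema, table)
        for schema, table in source_tables
        if (schema.lower(), table.lower()) not in existing
    ]


# Example with made-up table lists.
source = [("dbo", "Customers"), ("dbo", "Orders"), ("sales", "Invoices")]
target = [("dbo", "customers"), ("dbo", "Orders")]
print(tables_to_copy(source, target))
```

This only detects tables that are missing entirely; it does not notice new rows or changed columns in tables that already exist on the target.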

Thank you! well done.

The videos are very clear for people who would like to learn and practice. Thanks a lot. Your hard work is appreciated.

Wow this was explained so well. Thank you!!!

Great Explanation !!!!

Excellent, Excellent video. This has truly cemented the concepts and processes you are explaining in my brain. You are awesome, Adam!

What wonderful content you have placed on social media. What a world-class personality you are. People will certainly fall in love with your teaching.

It was so perfect, I was able to follow along and copy the data on the first attempt. Thanks.

The way you explain is super, Adam. Really nice.

Very well explained. Thank you so much!

Awesome video 👍 My requirement is the other way around: how can I copy from ADLS to Azure SQL? I have multiple files in multiple folders in an ADLS storage account.

Thank you for the great information. I wonder how much of this GUI-based development can be replaced by code only. In my experience it is easier to troubleshoot a thousand lines of code than GUI settings across 50 forms.

I was looking for this video. Thanks for making this. It helps a lot. Thanks again.

You are very good 👍 explained well thanks 😊

Thanks, Adam, for the wonderful lectures. I am facing an error while executing the command to fetch all the table details using the Lookup activity in ADF.

A database operation failed with the following error: 'Reference to database and/or server name in '<<database_name>>.INFORMATION_SCHEMA.TABLES' is not supported in this version of SQL Server.'

Reference to database and/or server name in '<<database_name>>.INFORMATION_SCHEMA.TABLES' is not supported in this version of SQL Server., SqlErrorNumber=40515,Class=15,State=1,

Could you please suggest a fix for this?
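A hedged reading of the error above: message 40515 is raised when a query names a database that the current connection is not allowed to reference, so one thing to try is dropping the database prefix and letting the connection's own database decide which catalog is listed. The sketch below shows such a query plus a rough check for a database-qualified reference; `LIST_TABLES_QUERY` and `uses_three_part_name` are hypothetical names, and the `WHERE` filter is an assumption about which objects are wanted.

```python
import re

# Two-part reference: no database or server name in front of INFORMATION_SCHEMA.
LIST_TABLES_QUERY = (
    "SELECT TABLE_SCHEMA, TABLE_NAME "
    "FROM INFORMATION_SCHEMA.TABLES "
    "WHERE TABLE_TYPE = 'BASE TABLE'"
)


def uses_three_part_name(query: str) -> bool:
    """Roughly detect a database-qualified INFORMATION_SCHEMA reference."""
    pattern = r"[\w\]>]\s*\.\s*INFORMATION_SCHEMA\."
    return re.search(pattern, query, re.IGNORECASE) is not None


# The first query is the shape quoted in the error message.
print(uses_three_part_name("SELECT * FROM <<database_name>>.INFORMATION_SCHEMA.TABLES"))
print(uses_three_part_name(LIST_TABLES_QUERY))
```

The check is a heuristic for spotting the prefix in a query string, not a SQL parser.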

👍👍👍 very good explanation.. 👍👍.

You are a legend. Next level editing and explanation

I want to copy a MySQL database to an Azure SQL database with all tables, constraints and data. Could you please help me?

Hi Adam, I really appreciate your video. Thanks for your videos! I hope you can also create a video on ODBC as a data source.

Thank you.

Amazing explanation, Adam! Thank you for this! Quick question: can the ForEach activity run things concurrently? I.e., in this example, can it pass the 3 table_name, schema_name values to the Copy Data activity at the same time?
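For context on the question above, the ForEach activity definition has an `isSequential` flag and a `batchCount` setting that control how many iterations run at once. The sketch below writes such a definition as a Python dictionary and prints it as JSON; the activity names `ForEachTable` and `LookupTables` are hypothetical, and the inner activity list is left empty for brevity.

```python
import json

# Sketch of a ForEach activity definition with parallel iterations enabled.
for_each_activity = {
    "name": "ForEachTable",  # hypothetical activity name
    "type": "ForEach",
    "typeProperties": {
        "isSequential": False,  # False lets iterations run in parallel
        "batchCount": 3,        # upper bound on concurrent iterations
        "items": {
            # 'LookupTables' is a hypothetical name for the Lookup activity.
            "value": "@activity('LookupTables').output.value",
            "type": "Expression",
        },
        "activities": [],  # the Copy Data activity would be listed here
    },
}

print(json.dumps(for_each_activity, indent=2))
```

With three tables and a batch count of 3, all three copies would be eligible to start together rather than one after another.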

Thank you Adam! I had been trying to follow some other written content to do exactly what you showed with no success. Your precise steps and explanation of the process were so helpful. I am successful now.

Is it possible to validate the different date formats coming from different source files in the Copy activity before inserting into one sink table?

thanks for the video

very simple yet powerful explanation

Awesome video, Adam!

Can we also use an MS Access database as source data?

Thank you! I really appreciate all you share, it truly helps me

Super cool video, sir. You are my go-to for Azure searches after Stack Overflow, LOL.

Sir, how about the cost? Is it better to use a loop or to run in parallel? Using a loop adds extra running time.

Wow! What a great video, with very easy step-by-step tutorials and explanations. Well done!

Kindly also share how to copy from one SQL table to another SQL table without Blob storage or an ingestion table. Thanks.

Thanks for all your helpful videos, thank you so much. I have one question.

How can we run a pipeline in parallel to copy data from 5 different sources to 5 respective targets? 1. Is it possible by passing 5 different pairs of connection strings (source and target)? 2. Can we have a master pipeline in which a ForEach activity calls this one pipeline and runs it in parallel for the 5 source-to-target movements?