Impute missing values using KNNImputer or IterativeImputer

Comments:

Thanks for watching! 🙌 Let me know if you have any questions about imputation and I'm happy to answer them! 👇

Respected Sir,

Can we do multiple imputation in EViews 9 for panel data?

Does this also work for categorical features?

Thank you!

Is there no need to standardise the SibSp and Age columns (e.g. scale them to between 0 and 1) before the imputation process? Or is that not relevant here?
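For context: KNNImputer finds neighbors with NaN-aware Euclidean distances computed on the raw values, so a wide-range column like Age can dominate a narrow one like SibSp. A minimal sketch of scaling before imputing, then mapping back to the original units, assuming made-up toy values (not the video's data):

```python
import numpy as np
from sklearn.impute import KNNImputer
from sklearn.preprocessing import MinMaxScaler

# Toy data (made up): columns are SibSp and Age; one Age value is missing
X = np.array([
    [1, 22.0],
    [1, 38.0],
    [0, 26.0],
    [1, np.nan],
    [0, 35.0],
])

# MinMaxScaler ignores NaNs when fitting and passes them through when transforming
scaler = MinMaxScaler()
X_scaled = scaler.fit_transform(X)

# Impute on the 0-1 scale so both columns contribute comparably to the distances
X_imputed_scaled = KNNImputer(n_neighbors=2).fit_transform(X_scaled)

# Map the completed data back to the original units
X_back = scaler.inverse_transform(X_imputed_scaled)
print(X_back[3])
```

In this tiny example the only feature available for the distance is SibSp, so scaling does not change which neighbors are picked; it starts to matter once several features with different ranges are compared.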

Thank you.

Love the clarity in your explanation!

Thank you, this is exactly what I need. Plus you've explained it very well!

Can this be applied to categorical data, or only to numerical data?

Fantastic video!! 👏🏼👏🏼👏🏼 Thank you for spreading the knowledge.

Thanks, that was very interesting.

Hello! Thank you very much for your interesting video! Do you know where I can find a video like this one about how to choose the number of neighbors?

Thank you very much
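One common way to pick the number of neighbors is to treat `n_neighbors` as a hyperparameter: put the imputer and a model in one pipeline and let cross-validation compare candidate values. A minimal sketch, assuming synthetic data with randomly knocked-out values and an arbitrary candidate grid (both are illustrative choices, not from the video):

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.impute import KNNImputer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline

# Synthetic classification data with roughly 10% of values set to NaN
X, y = make_classification(n_samples=200, n_features=5, random_state=0)
rng = np.random.default_rng(0)
X[rng.random(X.shape) < 0.1] = np.nan

# make_pipeline names the imputer step "knnimputer", hence the parameter prefix
pipe = make_pipeline(KNNImputer(), LogisticRegression())
param_grid = {"knnimputer__n_neighbors": [1, 3, 5, 10, 20]}

# Each fold fits the imputer on its training part only, then scores the model
grid = GridSearchCV(pipe, param_grid, cv=5, scoring="accuracy")
grid.fit(X, y)
print(grid.best_params_, grid.best_score_)
```

The "best" k here is whichever value gives the best downstream score, which is usually what matters more than how close the imputed values are to the (unknown) true ones.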

Thank you^^

Question: If we impute values of a feature based on other features, wouldn't that increase the likelihood of multicollinearity?

Hey Kevin, quick question: should k in KNN always be odd? If yes, why, and if no, why not? I was asked this in an interview. Thanks for all your content.
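The usual argument for an odd k comes from KNN classification with two classes, where an even k can produce a tied vote. KNNImputer does not vote: with its default `weights="uniform"` it fills a gap with the plain mean of the neighbors' values, so an even k causes no ties to break. A small sketch with made-up numbers:

```python
import numpy as np
from sklearn.impute import KNNImputer

# Made-up data: row 2 is missing its second value
X = np.array([
    [1.0, 10.0],
    [2.0, 20.0],
    [3.0, np.nan],
    [10.0, 100.0],
])

# With k=2, the two rows closest on the first column are rows 1 and 0,
# so the gap is filled with the mean of 20.0 and 10.0
imputed = KNNImputer(n_neighbors=2).fit_transform(X)
print(imputed[2, 1])  # 15.0
```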

Thanks for sharing this!

Why can't KNNImputer be used for categorical variables? KNN algorithms work for classification problems.

This imputer returns an array, while the OHE wants a DataFrame. How can we solve this if we want to put both inside a pipeline?
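One way around this is not to chain the imputer into the encoder at all, but to route columns with a ColumnTransformer: it selects columns by name from the original DataFrame, so the numeric columns go to KNNImputer and the categorical column goes to its own imputer plus OneHotEncoder (which also accepts NumPy arrays). A minimal sketch, assuming Titanic-like column names and made-up values:

```python
import numpy as np
import pandas as pd
from sklearn.compose import make_column_transformer
from sklearn.impute import KNNImputer, SimpleImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import OneHotEncoder

# Made-up rows: Age and Embarked each have one missing value
df = pd.DataFrame({
    "Age": [22.0, 38.0, np.nan, 35.0],
    "Fare": [7.25, 71.28, 7.92, 53.10],
    "Embarked": ["S", "C", np.nan, "S"],
})

ct = make_column_transformer(
    # Numeric columns: KNN imputation using Age and Fare together
    (KNNImputer(n_neighbors=2), ["Age", "Fare"]),
    # Categorical column: fill with the most frequent value, then one-hot encode
    (make_pipeline(SimpleImputer(strategy="most_frequent"), OneHotEncoder()), ["Embarked"]),
)
X_out = ct.fit_transform(df)
print(X_out)
```

The ColumnTransformer itself can then be the first step of a larger pipeline that ends in a model.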

It seems that we should definitely not try it on a large dataset. It takes forever.

Why don't you have 2M subscribers, man?

What is the effect on the dataset after imputation? Does it introduce any bias? I understand it's a mathematical way to insert a value into a NaN, but I feel there must be some effect from doing this. So when should we remove NaNs, and when should we use imputation?
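Imputed values are estimates, so they hide the fact that a value was missing. One common mitigation is to keep that information as an extra feature, so a model can learn whether missingness itself is informative; scikit-learn imputers support this through `add_indicator=True`. A small sketch with made-up numbers:

```python
import numpy as np
from sklearn.impute import KNNImputer

# Made-up data: row 1 is missing its second value
X = np.array([
    [1.0, 10.0],
    [2.0, np.nan],
    [3.0, 30.0],
    [4.0, 40.0],
])

# add_indicator appends one 0/1 column per feature that had missing values during fit
imputer = KNNImputer(n_neighbors=2, add_indicator=True)
X_out = imputer.fit_transform(X)
print(X_out)
# Columns: feature 0, imputed feature 1, "feature 1 was missing" flag
```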

Awesome video, couldn't be clearer. Thanks

Thanks for posting this. For features where there are missing values, should I be passing in the whole df to impute the missing values, or should I only include features that are correlated with the dependent variable I'm trying to impute?

In the example, there is only one missing value, so the imputer has an "easy" mission. What if we had more than a few missing values in this column, and "randomly" missing values across different columns? How does the imputer decide what to fill: which column is imputed first, and then, based on that, how does it advance to the "next best" column and fill in its missing values, and so on?
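For IterativeImputer the order is a parameter: it first fills every gap with a simple initial value (the column mean by default, via `initial_strategy`), then cycles through the features in `imputation_order`, regressing each one on the others, for up to `max_iter` rounds. The default `"ascending"` starts with the feature that has the fewest missing values. KNNImputer works differently: it handles each row on its own and has no column order. A sketch on made-up data that inspects the order actually used:

```python
import numpy as np
# This import is required before IterativeImputer can be imported
from sklearn.experimental import enable_iterative_imputer  # noqa: F401
from sklearn.impute import IterativeImputer

# Made-up data: column 0 and column 1 have one gap each, column 2 has two
X = np.array([
    [1.0, 2.0, np.nan],
    [2.0, np.nan, 6.0],
    [3.0, 6.0, np.nan],
    [4.0, 8.0, 12.0],
    [np.nan, 10.0, 15.0],
])

imp = IterativeImputer(imputation_order="ascending", max_iter=10, random_state=0)
X_filled = imp.fit_transform(X)

# Each entry of imputation_sequence_ records which feature was imputed at that step
first_round = [step.feat_idx for step in imp.imputation_sequence_[:3]]
print(first_round)
```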

Do IterativeImputer and KNNImputer work only with numerical values, or can they also impute string/alphanumeric values?
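Both imputers expect numeric input, so passing raw strings fails during input validation. SimpleImputer, by contrast, accepts string data with `strategy="most_frequent"` or `"constant"`. A small sketch, assuming a made-up Embarked column:

```python
import numpy as np
import pandas as pd
from sklearn.impute import KNNImputer, SimpleImputer

df = pd.DataFrame({"Embarked": ["S", "C", np.nan, "S"]})

# KNNImputer tries to convert the input to floats and fails on strings
knn_failed = False
try:
    KNNImputer().fit_transform(df)
except (ValueError, TypeError) as exc:
    knn_failed = True
    print("KNNImputer failed:", exc)

# SimpleImputer can fill string columns with the most frequent category
filled = SimpleImputer(strategy="most_frequent").fit_transform(df)
print(filled.ravel())
```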

How can KNN be used to interpolate time series data?

What do I use if the values are categorical?

Very nice video! However, I want to ask: can the KNN imputer be used for object (string) data?

Thank you for such an amazing video!

I used `pd.get_dummies(df, columns=['Employment.Type'], drop_first=True)` to encode my categorical data as numerical data and then ran the KNNImputer, but it's giving me an error: TypeError: invalid type promotion.

Any insights what might be going wrong?
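One plausible cause (an assumption, not a diagnosis of this specific frame) is that a column that is not plain numeric, such as a datetime or a leftover object column, is still in the DataFrame handed to the imputer. A sketch of a sanity check that forces the dummy columns to float and keeps only numeric columns before imputing, with a made-up `Income` column:

```python
import numpy as np
import pandas as pd
from sklearn.impute import KNNImputer

# Hypothetical frame: 'Employment.Type' is categorical, 'Income' has a gap
df = pd.DataFrame({
    "Employment.Type": ["Salaried", "Self employed", "Salaried", "Self employed"],
    "Income": [50.0, 65.0, np.nan, 70.0],
})

# dtype=float makes the dummy columns floats instead of booleans/integers
encoded = pd.get_dummies(df, columns=["Employment.Type"], drop_first=True, dtype=float)

# List anything that is not numeric; such columns would need separate handling
non_numeric = encoded.select_dtypes(exclude="number").columns.tolist()
print("non-numeric columns:", non_numeric)

numeric = encoded.select_dtypes(include="number")
imputed = KNNImputer(n_neighbors=2).fit_transform(numeric)
print(imputed)
```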

You are awesome, man!! You saved me a lot of time yet again!!!!

I really love your videos. They are just right: concise and informative, with no unnecessary fluff. Thank you so much for these.

How to handle missing categorical variables?
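Besides filling with the most frequent category, another common option is to treat "missing" as a category of its own, which keeps the information that the value was absent. A minimal sketch with SimpleImputer's `"constant"` strategy followed by one-hot encoding, assuming a made-up Embarked column:

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import OneHotEncoder

df = pd.DataFrame({"Embarked": ["S", "C", np.nan, "Q"]})

cat_pipe = make_pipeline(
    # Replace NaN with the literal string "missing"
    SimpleImputer(strategy="constant", fill_value="missing"),
    # "missing" then becomes its own one-hot column
    OneHotEncoder(),
)
encoded = cat_pipe.fit_transform(df).toarray()
print(cat_pipe[-1].categories_)
print(encoded)
```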

Thank you!

Ответить

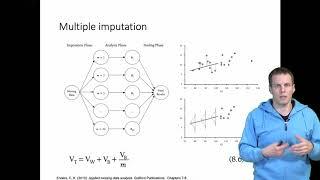

Super helpful, as always. Is IterativeImputer the sklearn version of MICE?
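The scikit-learn documentation describes IterativeImputer as inspired by the R MICE package, and notes that it can be used for multiple imputation by running it repeatedly on the same data with different random seeds and `sample_posterior=True`. A sketch of that idea on synthetic data (the data generation is an illustrative assumption):

```python
import numpy as np
from sklearn.experimental import enable_iterative_imputer  # noqa: F401
from sklearn.impute import IterativeImputer

# Synthetic data: column 2 depends on columns 0 and 1, then ~10% of values are removed
rng = np.random.default_rng(0)
X = rng.normal(size=(50, 3))
X[:, 2] = X[:, 0] + X[:, 1] + rng.normal(scale=0.5, size=50)
mask = rng.random(X.shape) < 0.1
X[mask] = np.nan

# sample_posterior=True draws imputations from the predictive distribution,
# so different seeds give different plausible completed datasets
imputations = [
    IterativeImputer(sample_posterior=True, random_state=seed).fit_transform(X)
    for seed in range(5)
]
```

Each completed dataset would then be analysed separately and the results pooled, as in classic multiple imputation.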

I have one doubt: which should come first, missing value imputation or outlier removal?

What if the first column has a missing value?

It is a categorical feature, and it would be better if we used multivariate regression.

It has values of 0 or 1, but if we use KNNImputer or IterativeImputer, it imputes a float value. I think someone else in the comments has asked the same question as mine.

I can't think of a realistic example where KNNImputer is better than IterativeImputer; IterativeImputer seems much more robust.

Am I the only one thinking this?

Hi, I tried encoding my categorical variables (a boolean value column) and then running the data through a KNNImputer, but instead of getting 1's and 0's I got values in between, for example 0.4, 0.9, etc. Is there anything I am missing, or is there any way to improve the predictions of this imputer?
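The in-between values are expected: KNNImputer averages the neighbors' 0/1 values, so the result is the fraction of neighbors that are 1. One simple workaround (a choice, not the only fix) is to round that column afterwards; with an odd k, rounding the mean of 0/1 values is the same as a majority vote, since the mean can never be exactly 0.5. A sketch with a made-up binary column:

```python
import numpy as np
from sklearn.impute import KNNImputer

# Made-up data: columns are Age and a 0/1 flag; the flag is missing in row 2
X = np.array([
    [22.0, 1.0],
    [24.0, 1.0],
    [23.0, np.nan],
    [60.0, 0.0],
    [25.0, 0.0],
])

raw = KNNImputer(n_neighbors=3).fit_transform(X)
print(raw[2, 1])  # mean of the three nearest flags (1, 1, 0), i.e. about 0.667

# Round the binary column back to 0/1 (majority vote among the 3 neighbors)
binary = raw.copy()
binary[:, 1] = np.round(binary[:, 1])
print(binary[2, 1])
```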

Kevin, how does it work if, let's say, B and C are both missing?
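For KNNImputer, distances are computed only over the features that both rows have, so a row missing B and C is compared to the others using A alone; each missing feature is then filled from neighbors that do have that feature. A small sketch with made-up values:

```python
import numpy as np
from sklearn.impute import KNNImputer

# Columns A, B, C (made-up values); the last row is missing both B and C
X = np.array([
    [1.0, 10.0, 100.0],
    [2.0, 20.0, 200.0],
    [8.0, 80.0, 800.0],
    [1.5, np.nan, np.nan],
])

# The two rows closest on A (rows 0 and 1) supply both B and C
imputed = KNNImputer(n_neighbors=2).fit_transform(X)
print(imputed[3])
```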

Kevin, you just expanded my column transformation vocabulary. Thank you.

My idea: make line plots of the columns that have null values against the other continuous columns, and box plots against the discrete ones, then impute a constant value based on the result. For example, if Pclass is 2, you impute the median Fare of Pclass 2 wherever Fare is missing and Pclass is 2. Basically it's similar to IterativeImputer, only manual: slower, but maybe with better results because of human knowledge about the problem statement. What are your thoughts about this idea?
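The Pclass/Fare rule described above can be written as a group-wise median fill in pandas. A minimal sketch with made-up values:

```python
import numpy as np
import pandas as pd

# Made-up rows: Fare is missing for one Pclass 2 passenger and one Pclass 3 passenger
df = pd.DataFrame({
    "Pclass": [1, 2, 2, 2, 3, 3],
    "Fare": [80.0, 13.0, 21.0, np.nan, 7.25, np.nan],
})

# Median Fare within each Pclass, aligned back to every row
group_median = df.groupby("Pclass")["Fare"].transform("median")

# Fill each missing Fare with the median of its own class
df["Fare"] = df["Fare"].fillna(group_median)
print(df)
```

If this feeds a model, computing the medians on the training split only (and reusing them on the test split) avoids leaking information from the test data.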

What is the best imputer for categorical variables??