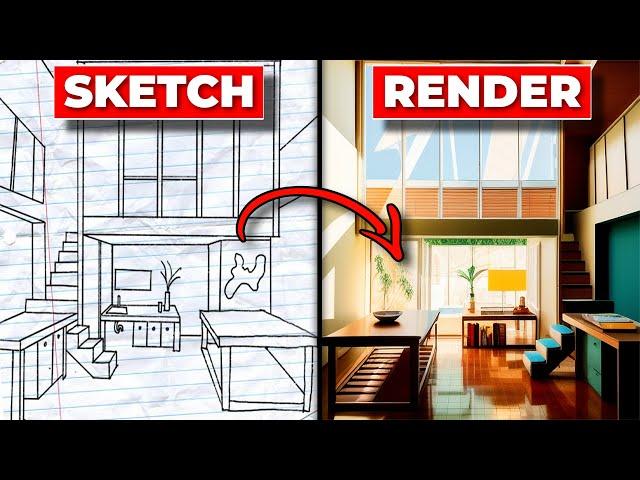

EASILY Create Renders From A Sketch With AI - Stable Diffusion and Controlnet Tutorial

Comments:

Could I do the same test for a building's facade instead of its interior? Or is Midjourney better for that?


Every single one of these "simple" methods is like this: You want to know if it's going to rain? Here's what you need to do: take a shovel, a raincoat and a fishing pole! Place an alarm clock in your fridge! Now take the next bus to the second city nearby and wait in the woods till Friday...

BRUH, I JUST WANT TO KNOW IF IT'S GONNA RAIN OR NOT!!!

Thank you


Can you do the opposite: turn an image into an outline sketch?


Not working.

NansException: A tensor with all NaNs was produced in Unet. This could be either because there's not enough precision to represent the picture, or because your video card does not support half type. Try setting the "Upcast cross attention layer to float32" option in Settings > Stable Diffusion or using the --no-half commandline argument to fix this. Use --disable-nan-check commandline argument to disable this check.

As someone who understands very little about this topic, I found this video an amazing tutorial. Thank you! It should be said, though, that if your computer doesn't have an Nvidia-branded GPU, it will not generate images from sketches within a reasonable amount of time. After following this tutorial and spending about 10 solid hours playing with Stable Diffusion and researching how to get SD to generate one image in less than 45 minutes, the only solution I can find is to either pay for Google Colab or upgrade the computer's GPU. If anyone can provide me with information proving this research wrong, I'd love that and be sincerely grateful.

As of now, I believe that if you're like me and want to use Stable Diffusion to create architectural renders for free on a laptop with a basic Intel GPU, it won't happen. More likely, you'll have to spend your money on Midjourney.

Correction: I fixed the first error. Now I'm receiving the following: 'NansException: A tensor with all NaNs was produced in Unet. This could be either because there's not enough precision to represent the picture, or because your video card does not support half type. Try setting the "Upcast cross attention layer to float32" option in Settings > Stable Diffusion or using the --no-half commandline argument to fix this. Use --disable-nan-check commandline argument to disable this check.'

I changed the setting to "Upcast cross attention layer to float32", but no luck.

I get an error when trying to run webui-user. I'm getting this message:

Couldn't launch python

exit code: 9009

stderr: 'python' is not recognized as an internal or external command, operable program or batch file.

Anyone know what to do?

Are you still using ? I successfully downloaded it but haven't been able to generate any images yet.


Hello, thanks for the video. Which models would be good for image-to-image? For example, input = empty room -> output = the same room with furniture and decoration, or input = photo of my living room -> output = a redesign of my living room with new furniture and decoration.


Good video, but this grid background is absolutely terrible to look at.


May I ask why you chose 1.5 over 2 and XL?


Super awesome tutorial, easy to follow. I pasted the Scribble model into the models folder, but I don't seem to have any files in there, whereas you have a ton of files in there. Also, I have it processing an image, but it's taking about 20 minutes for one image. Is that normal?


It says "RuntimeError: Not enough memory, use lower resolution (max approx. 640x640). Need: 0.4GB free, Have:0.1GB free" when I tried to generate from the prompt. Could you tell me what I should do to increase memory?


@altarch When I click Batch, I get a runtime error saying it's not able to use the GPU. Thoughts?


Hello, thanks for the great tutorial. I was wondering: could I install it on my MacBook?


Does Stable Diffusion with ControlNet only work with Nvidia?


I got through all the steps, but unfortunately, when I went to generate some images, I got this error message:

FileNotFoundError: [WinError 3] The system cannot find the path specified: ''

Hi, thanks for the video. I watched this one and your Midjourney one. So is the essential difference between Stable Diffusion and Midjourney that, when starting from a 3D sketch, Stable Diffusion will retain the original geometry, spatial parameters, and contents (e.g., furniture) and render them, whereas if you upload the same 3D sketch to Midjourney, it will use the image as a guide and reimagine the spatial parameters and contents? Or is there a Midjourney prompt that will ask it to retain the spatial parameters of the sketch? Thanks.


🌹


THIS... is EXCELLENT !!!! And I mean it 👍👍👍🙂🙂🙂


love ur intro and branding


Awesome, I tried it and it works. Now, what's the easiest way to get back to it after I've logged off? Thank you.


Amazing Video, thanks man


Can you also make a Lumion + Midjourney/Stable Diffusion workflow video?


Hey! I followed all the steps, but there's no scribble option for the model when I upload the sketch. It just says "None". Any idea what the issue could be? Thanks for the video.


Hello, and first of all, thank you for sharing this. I’m having trouble in Stable Diffusion: next to Preprocessor, the Model field doesn’t have any options; it just says "None". Can you help me resolve this?


Hello there, I tried all the steps one by one, and when I launch webui-user.bat, I get the following message:

Couldn't launch python

exit code: 9009

stderr: Python could not be found. Run the shortcut without arguments to install it from the Microsoft Store, or disable this shortcut under

Launch unsuccessful. Exiting.

I installed Python 3.10.6, as recommended.

Any recommendations?

Thanks in advance!

Hey, we're loving what you do here!! we'd like to collaborate with you. Please let us know how we can get in touch.


Hello, can you please help me?

I get this message at the end:

Stable diffusion model failed to load

Applying attention optimization: Doggettx... done.

What should I do?

I was able to complete the download steps but got this error. Can anyone help?

OutOfMemoryError: CUDA out of memory. Tried to allocate 64.00 MiB (GPU 0; 4.00 GiB total capacity; 2.85 GiB already allocated; 0 bytes free; 2.89 GiB reserved in total by PyTorch) If reserved memory is >> allocated memory try setting max_split_size_mb to avoid fragmentation. See documentation for Memory Management and PYTORCH_CUDA_ALLOC_CONF

Time taken: 9.34s

Torch active/reserved: 2935/2968 MiB, Sys VRAM: 4096/4096 MiB (100.0%)

Super well explained and organized, thank you so much. More architecture-related vids would be great.


Exactly what I was looking for, thanks so much.


Thanks for the tutorial sir 🙏


Is this possible with MJ?
